In a landscape where diagnostic speed is as vital as accuracy, and administrative burnout is as much of a threat as clinical error, AI diagnostics are both hailed and scrutinised. One of the most recognised experts on Bayesian networks and explainable machine learning, Ing. Jiří Vomlel, Ph.D., from the Czech Academy of Sciences, provides a sobering look at the benefits and limitations of Explainable AI (XAI) – casting it not just as a tool for causal understanding, but as the blueprint for truly personalised care.

What is Explainable AI (XAI), and why is it crucial for healthcare?

Explainable AI refers to systems designed so that their actions and decisions can be readily understood by humans. In medicine this is critical because many AI models act as black boxes, providing a diagnosis or recommendation without explaining why. For a doctor to trust and act on an AI’s suggestion, they must understand the underlying logic well enough to confirm that the decision is grounded in medically sound data rather than a technical artefact or data bias. Explainability is also a regulatory and ethical requirement: under frameworks such as the EU AI Act, high-risk AI applications in healthcare must offer meaningful transparency to clinicians and patients alike.

If you look at today’s medical landscape, in what specific fields is AI currently showing the most significant results?

AI is most advanced in medical imaging and diagnostics, particularly in radiology, dermatology, and oncology. Because AI algorithms can be trained on millions of images, they can often detect patterns – such as early-stage tumours or skin cancer – that might be invisible to the human eye. AI is also increasingly used in drug discovery to predict how different chemical compounds will interact with the body, significantly speeding up the development of new medicines.

On the other hand, what are its limitations? For instance, AI learns from historical patient data. What are the risks of confusing correlation with causation?

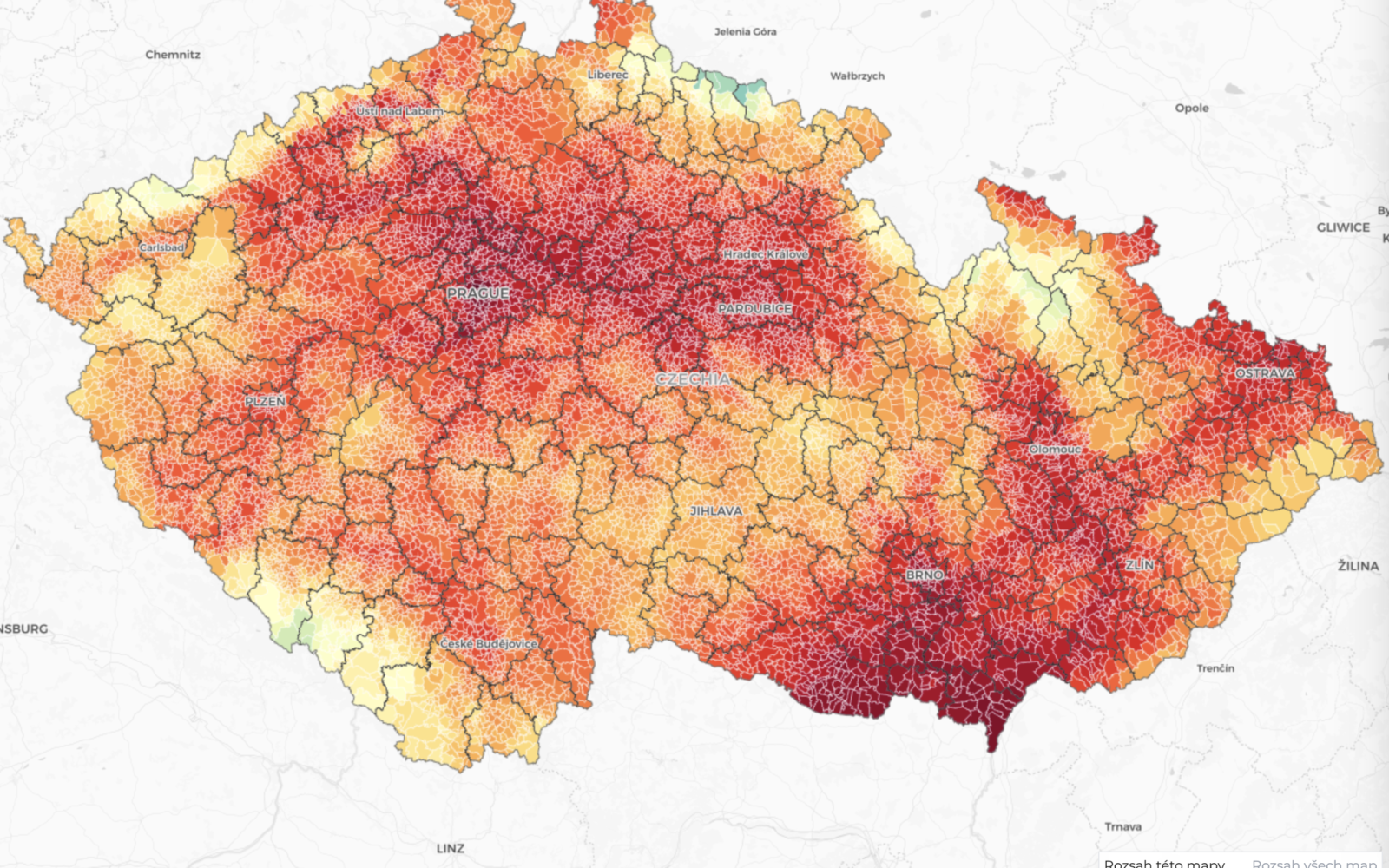

This is one of the most important conceptual limitations of current medical AI. A model trained on health records may identify a statistical association, for example that a certain imaging pattern co-occurs with a diagnosis, without understanding why. If that correlation is driven by a confounding factor (such as patient age, ethnicity, hospital equipment, or data collection practices), the model may generalise incorrectly to new populations or clinical settings. When large and anonymised datasets are used for training, such biases can be encoded silently and at scale. A pattern that works in one dataset is not evidence of a causal mechanism; it is a hypothesis. This is why AI-derived insights should always be subjected to prospective clinical validation before informing treatment decisions, and why diverse, representative training data is not a nice-to-have but a safety requirement.
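To make the confounding risk concrete, here is a minimal sketch in Python. All numbers are invented for illustration and do not come from any real study: two hypothetical hospitals, "A" and "B", use different equipment, so an imaging pattern shows up far more often at hospital A, which also happens to see sicker patients. Pooled together, the pattern looks predictive of disease; within each hospital, it carries no information at all.

```python
from collections import namedtuple

# One cell of a hypothetical contingency table (all counts are invented).
Cell = namedtuple("Cell", "hospital pattern disease count")

COUNTS = [
    # Hospital A: specialist centre, 40% prevalence, pattern seen in 80% of scans
    Cell("A", True, True, 320), Cell("A", True, False, 480),
    Cell("A", False, True, 80), Cell("A", False, False, 120),
    # Hospital B: general clinic, 10% prevalence, pattern seen in 20% of scans
    Cell("B", True, True, 20), Cell("B", True, False, 180),
    Cell("B", False, True, 80), Cell("B", False, False, 720),
]


def disease_rate(cells, pattern, hospital=None):
    """Share of diseased patients among those with the given pattern status,
    optionally restricted to one hospital (i.e. stratified by the confounder)."""
    selected = [
        c for c in cells
        if c.pattern == pattern and (hospital is None or c.hospital == hospital)
    ]
    total = sum(c.count for c in selected)
    diseased = sum(c.count for c in selected if c.disease)
    return diseased / total


# Pooled data: the pattern appears to double the disease rate.
print(f"pooled: {disease_rate(COUNTS, True):.2f} vs {disease_rate(COUNTS, False):.2f}")

# Stratified by hospital: the apparent effect disappears.
for h in ("A", "B"):
    with_p = disease_rate(COUNTS, True, h)
    without_p = disease_rate(COUNTS, False, h)
    print(f"hospital {h}: {with_p:.2f} vs {without_p:.2f}")
```

In this toy table the pooled disease rate is 0.34 with the pattern and 0.16 without it, while inside each hospital the two rates are identical (0.40 at A, 0.10 at B). A model trained on the pooled data would learn the hospital's equipment signature, not a disease marker, which is the kind of silent bias described above.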

When it comes to the diagnostic process, how can AI improve efficiency and support more personalised care?

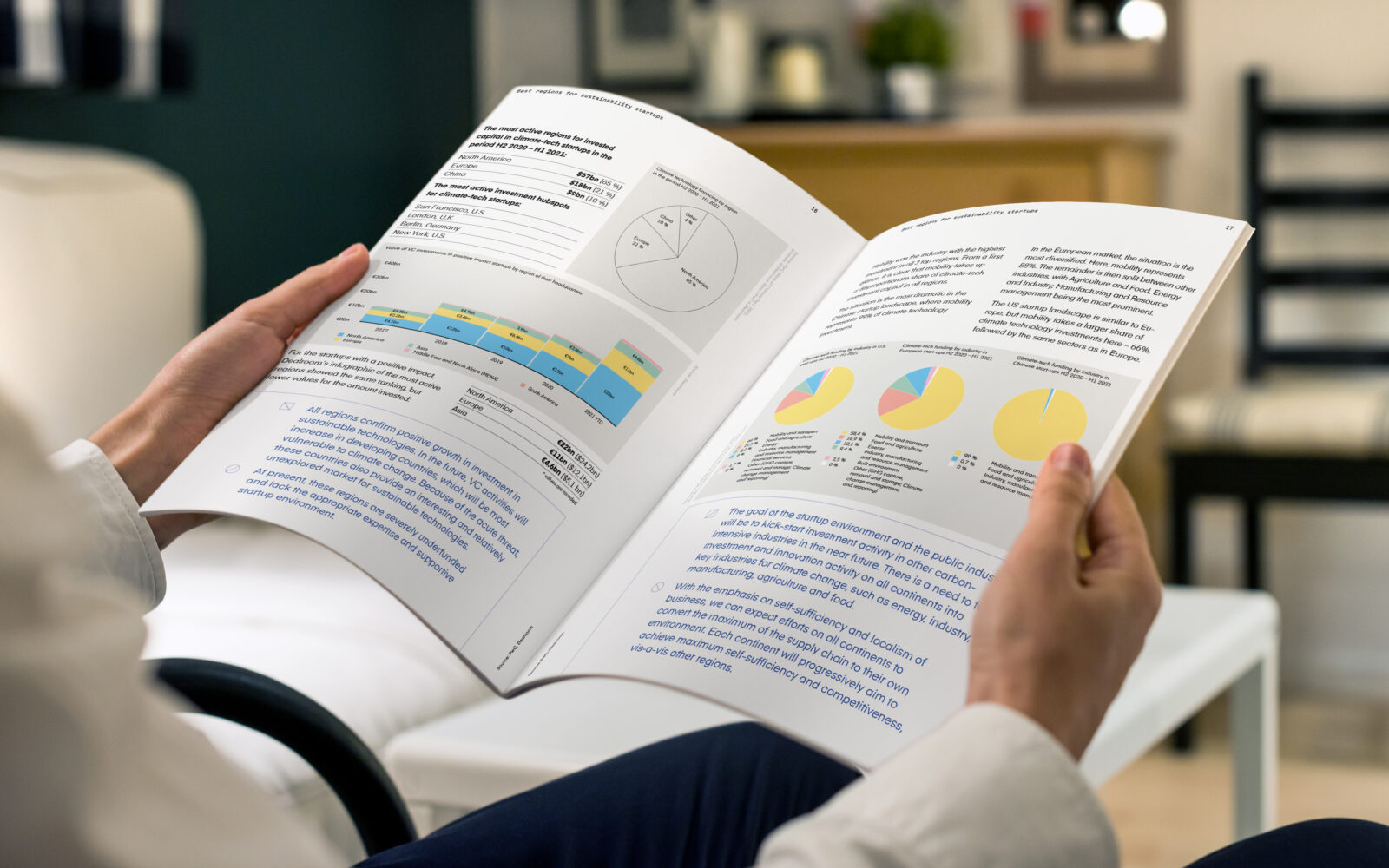

AI offers efficiency gains at multiple stages of the diagnostic pathway. In imaging, concrete examples include roughly halving MRI examination times and reducing radiation exposure in CT scans. These outcomes benefit patients and improve throughput. In oncology and genomics, AI can integrate imaging, laboratory, and genetic data to suggest individualised treatment plans – an approach commonly called precision medicine. By processing and synthesising far more variables than a clinician could hold in mind simultaneously, AI makes genuinely personalised care more tractable at scale.

Your own research area includes adaptive testing in psychometrics. Does that field offer any useful perspective on how AI could improve diagnostic efficiency in medicine?

The parallel is surprisingly close. In computer-adaptive testing, the system selects the next question in real time based on the responses already given, the goal being to reach a reliable estimate of the respondent’s ability in as few steps as possible, rather than administering the same fixed battery to everyone. An AI-driven diagnostic pathway can work on the same logic: dynamically prioritising which test, scan, or question to request next based on findings already available, converging on a diagnosis efficiently rather than following a predetermined protocol regardless of what has emerged so far. Both approaches are based on the same fundamental principle – using the information you have available to decide what information you still need – and both replace a universal approach with one that responds to individual needs. The practical implication for healthcare is significant: not only could such systems reduce unnecessary investigations, but they could also shorten the time to diagnosis for patients whose presentation does not fit the standard pathway.
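The principle of using what is already known to decide what to ask next can be sketched in a few lines of Python. This is an illustrative toy, not a description of any deployed system or of the interviewee's own models: the conditions, tests, and probabilities are invented, tests are assumed to be conditionally independent given the condition, and the selection rule (choose the test with the lowest expected posterior entropy) is one common choice among several.

```python
import math

# Hypothetical prior belief over three candidate conditions (invented numbers).
PRIOR = {"flu": 0.5, "pneumonia": 0.3, "asthma": 0.2}

# Hypothetical P(test is positive | condition) for each available test.
LIKELIHOOD = {
    "fever_check": {"flu": 0.9, "pneumonia": 0.8, "asthma": 0.1},
    "chest_xray": {"flu": 0.1, "pneumonia": 0.9, "asthma": 0.1},
    "spirometry": {"flu": 0.1, "pneumonia": 0.2, "asthma": 0.9},
}


def entropy(belief):
    """Shannon entropy in bits: how uncertain the current belief is."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


def update(belief, test, positive):
    """Bayes' rule: revise the belief after observing one test result."""
    unnormalised = {}
    for condition, p in belief.items():
        like = LIKELIHOOD[test][condition]
        unnormalised[condition] = p * (like if positive else 1.0 - like)
    z = sum(unnormalised.values())
    return {c: v / z for c, v in unnormalised.items()}


def expected_entropy(belief, test):
    """Average uncertainty remaining after the test, weighted by how likely
    each outcome (positive or negative) is under the current belief."""
    p_pos = sum(p * LIKELIHOOD[test][c] for c, p in belief.items())
    return (p_pos * entropy(update(belief, test, True))
            + (1.0 - p_pos) * entropy(update(belief, test, False)))


def next_test(belief, remaining):
    """Pick the remaining test expected to leave the least uncertainty."""
    return min(remaining, key=lambda t: expected_entropy(belief, t))


# One adaptive step: choose a test, observe a (here simulated) positive result,
# update the belief, then choose again from the tests that remain.
belief = dict(PRIOR)
remaining = set(LIKELIHOOD)
first = next_test(belief, remaining)
belief = update(belief, first, positive=True)
remaining.discard(first)
second = next_test(belief, remaining)
print(first, "->", second)
```

The second choice depends on the result of the first, which is exactly what distinguishes an adaptive pathway from a fixed battery: a different first result would generally lead to a different follow-up test.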

And beyond diagnosis – how can AI improve the daily workload of healthcare professionals?

AI can significantly reduce the administrative burden on doctors and nurses. It can automate documentation, for example by converting spoken consultations into structured clinical notes; manage appointment scheduling; and assist with triage in emergency settings by prioritising cases based on urgency signals. By absorbing these repetitive, time-intensive tasks, AI allows healthcare professionals to redirect their attention toward complex clinical reasoning and direct patient interaction: the aspects of care that genuinely require human judgment and empathy.